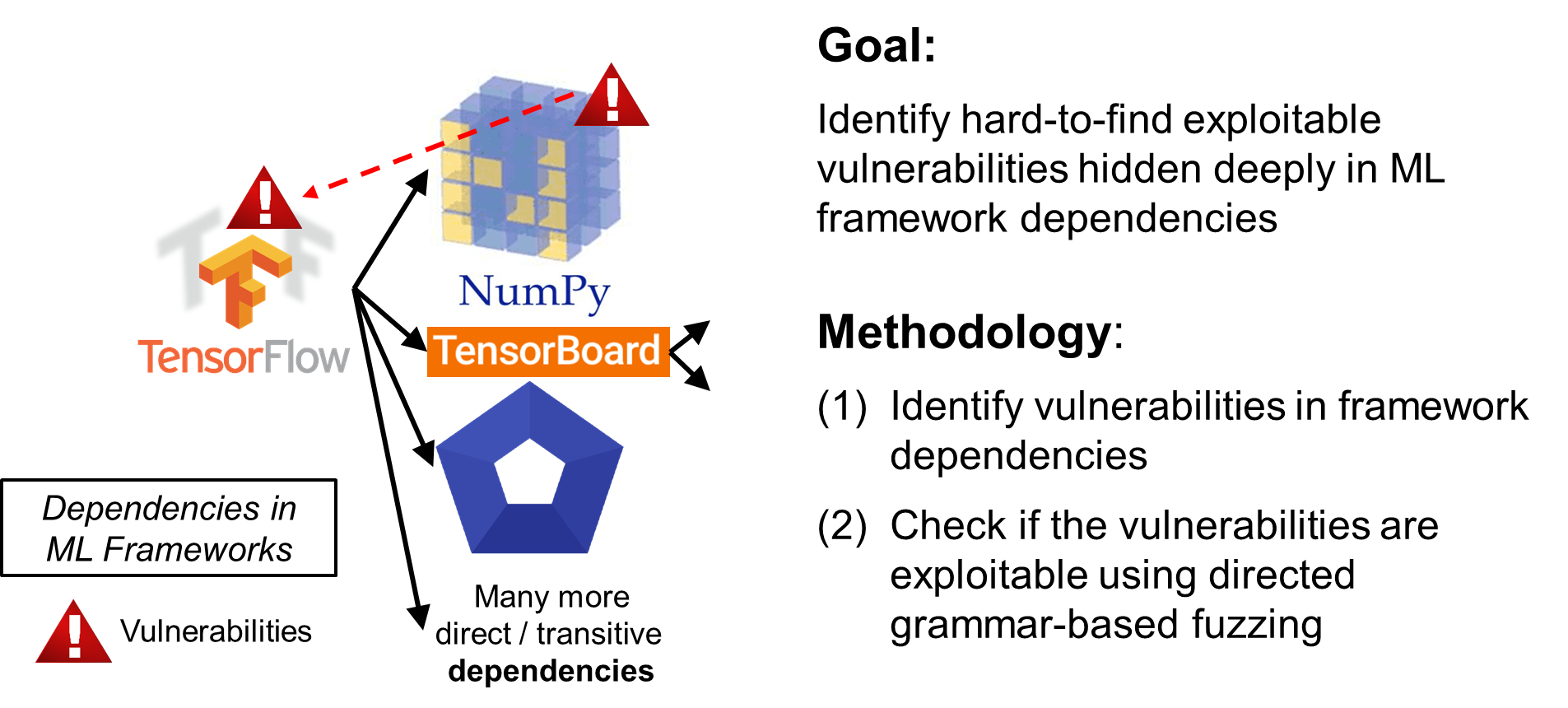

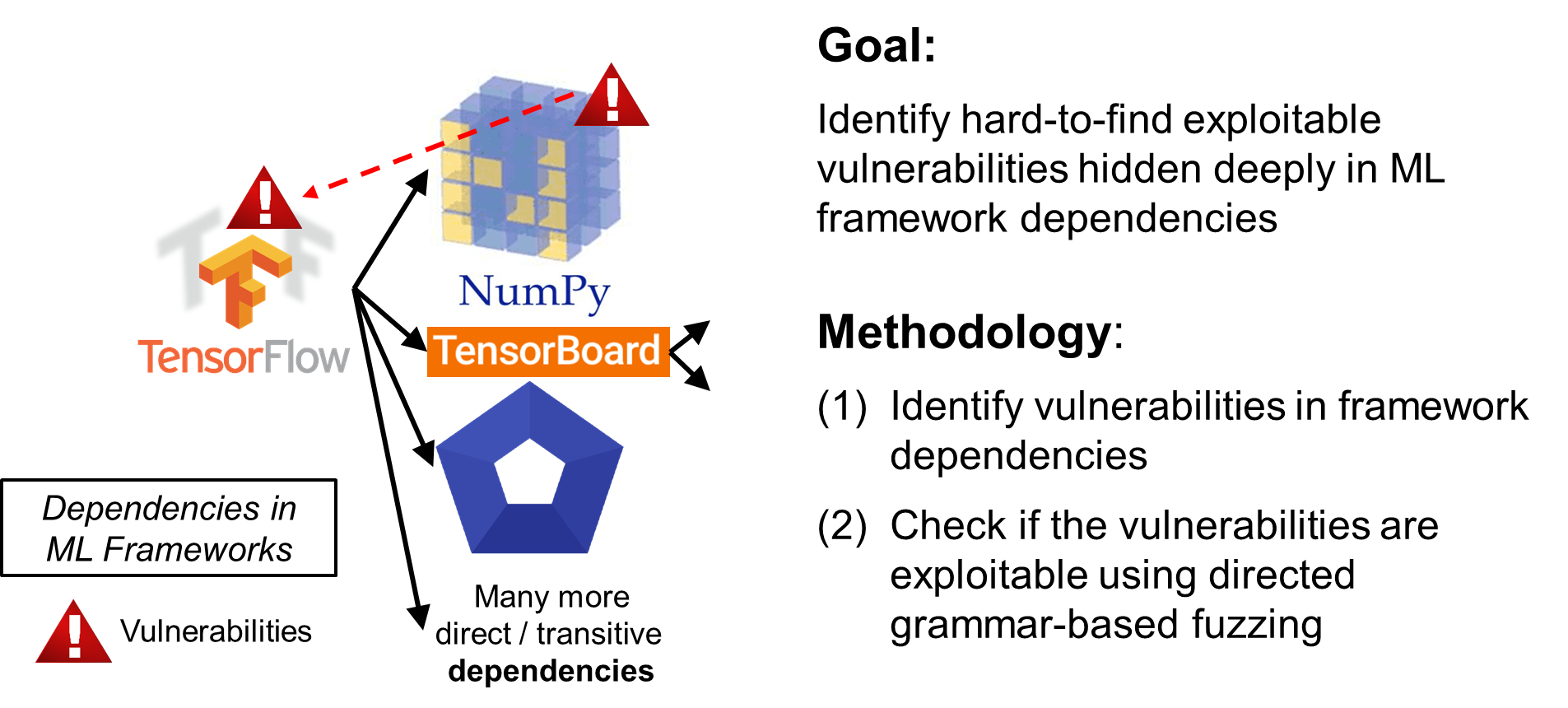

Smart systems increasingly depend on machine learning (ML) frameworks, such as TensorFlow, to implement their features. These frameworks are built on many third-party libraries, which in turn depend on many others. Simply trusting and reusing a framework poses a security risk, because the framework and its direct and transitive dependencies can contain exploitable vulnerabilities. To mitigate this risk, this project will create an advanced software composition analysis solution that scans dependency hierarchies and builds new deep learning architectures to analyse code and document repository data and flag vulnerabilities. Further, each flagged vulnerability will be checked for reachability using our new directed grammar-based fuzzing solution, which generates valid test cases (following predefined grammars) and drives test execution towards the vulnerable code. Our solution targets vulnerabilities hidden deep in ML framework dependencies, which are hard for a classic fuzzer to uncover and hard for framework developers to recognize because they appear in third-party code.
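
To make the idea of scanning a dependency hierarchy concrete, here is a minimal sketch in Python. It is not the project's analysis: the package names, the dependency graph, and the advisory set are all hypothetical, and a plain breadth-first search stands in for the deep-learning-based detection described above. It only illustrates how a vulnerable transitive dependency can be traced back to the framework that pulls it in.

```python
from collections import deque

# Hypothetical dependency graph: package -> its direct dependencies.
DEPENDENCIES = {
    "ml-framework": ["tensor-core", "image-io"],
    "tensor-core": ["linalg-native"],
    "image-io": ["png-codec", "compress-lib"],
    "linalg-native": [],
    "png-codec": ["compress-lib"],
    "compress-lib": [],
}

# Hypothetical set of packages with a known advisory.
KNOWN_VULNERABLE = {"compress-lib"}


def find_vulnerable_paths(root, dependencies, vulnerable):
    """Breadth-first walk from root.

    Returns a dict mapping each reachable vulnerable package to the
    shortest dependency chain leading to it from root.
    """
    paths = {}
    queue = deque([[root]])
    visited = {root}
    while queue:
        path = queue.popleft()
        package = path[-1]
        if package in vulnerable:
            paths[package] = path
        for dep in dependencies.get(package, []):
            if dep not in visited:  # also guards against dependency cycles
                visited.add(dep)
                queue.append(path + [dep])
    return paths


result = find_vulnerable_paths("ml-framework", DEPENDENCIES, KNOWN_VULNERABLE)
for package, chain in result.items():
    print(f"{package}: {' -> '.join(chain)}")
```

The reported chain is the kind of starting point a directed fuzzer would need, since it identifies which third-party component the generated test cases must ultimately reach.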